Edge AI. Under control.

LGN’s edge AI management software puts you in complete technical and financial control of your edge AI.

This allows you to scale out edge AI products without exploding your costs.

Orchestration framework for fleet-scale edge AI.

Neuroform is a cloud-native framework for orchestrating fleet-scale edge AI. It extends the cloud to the edge and is built on top of Geoform™.

Manage the deployment of edge AI at fleet scale

- harness the best available enterprise-class orchestration frameworks for AI and IoT

- manage AI/ML model deployment across large fleets of edge devices

Monitor and improve real-world model performance

- monitor how your models perform when exposed to real-world data

- gather relevant data and use it to retrain and update models over time

Reduce transfer, storage and processing costs

- optimise data selection trade-offs to reduce the data you need to transfer and process, without sacrificing visibility, insight or learning speed

Extended for fleet-scale edge AI.

Neuroform extends this ecosystem with a solution that provides fleet management, a supervised edge device runtime and configurable workflows for data selection and retraining.

[Diagram labels: Data, Processing, Machine learning, Deployment, Orchestration, Dashboard, Services, Runtime, Fleet, Edge hardware]

Fleet-scale resource management

A custom Fleet resource lets you configure model deployment, supervision and data selection strategies.

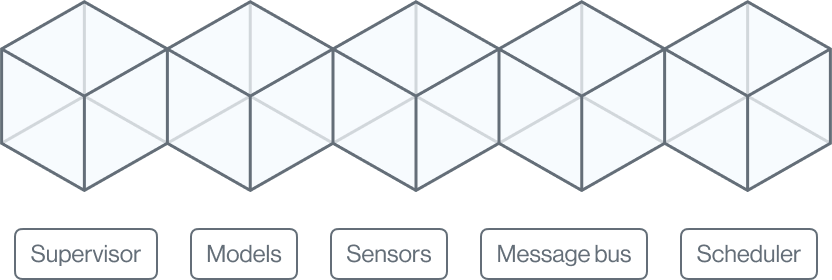

Supervised edge device runtime

A Docker-based runtime with a scheduler client, a supervisor and an MQTT broker for message-bus-based sensor integration.

Data selection and retraining

Configure data selection, transfer and storage strategies, along with Kubeflow retraining workflows.
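To make the idea of a data selection strategy concrete, here is a minimal sketch of one common approach: uploading only the samples a model was least sure about, up to a fixed transfer budget. This is an illustration only and not Neuroform's API; the `Prediction` type, the `select_for_upload` function and the `max_confidence` and `budget` parameters are hypothetical names chosen for this example.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    sample_id: str
    confidence: float  # model's top-class probability, 0.0 to 1.0


def select_for_upload(predictions, max_confidence=0.6, budget=100):
    """Pick the least-confident samples, up to a transfer budget.

    Hypothetical strategy: samples the model is unsure about are assumed
    to be the most useful ones to label and retrain on.
    """
    uncertain = [p for p in predictions if p.confidence < max_confidence]
    uncertain.sort(key=lambda p: p.confidence)  # least confident first
    return [p.sample_id for p in uncertain[:budget]]


if __name__ == "__main__":
    batch = [
        Prediction("frame-001", 0.98),
        Prediction("frame-002", 0.41),
        Prediction("frame-003", 0.55),
        Prediction("frame-004", 0.87),
    ]
    # Only the two uncertain frames are candidates; the budget keeps one.
    print(select_for_upload(batch, max_confidence=0.6, budget=1))
```

Lowering `max_confidence` or `budget` reduces the data transferred, at the cost of fewer examples to learn from. That is the kind of trade-off a selection strategy has to balance.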

Dashboard, monitoring and alerts

Monitor your edge AI fleet in real time and get alerts when model performance drops below thresholds that you configure.
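As a rough illustration of threshold-based alerting, the sketch below tracks a rolling accuracy window for a single device and signals an alert once accuracy falls below a configured threshold. It is a simplified example under stated assumptions, not Neuroform's implementation; the `AccuracyMonitor` class and its `threshold` and `window` parameters are hypothetical, and it assumes labelled outcomes are available for each prediction.

```python
from collections import deque


class AccuracyMonitor:
    """Tracks a rolling accuracy window for one device (hypothetical helper)."""

    def __init__(self, threshold, window=50):
        self.threshold = threshold
        self.results = deque(maxlen=window)  # oldest outcomes drop off

    def accuracy(self):
        """Fraction of correct outcomes in the window (1.0 if empty)."""
        if not self.results:
            return 1.0
        return sum(self.results) / len(self.results)

    def record(self, correct):
        """Record one labelled outcome; return True if an alert should fire."""
        self.results.append(bool(correct))
        # Wait for a full window so a single early miss does not alert.
        window_full = len(self.results) == self.results.maxlen
        return window_full and self.accuracy() < self.threshold


if __name__ == "__main__":
    monitor = AccuracyMonitor(threshold=0.8, window=4)
    for outcome in [True, False, False, True]:
        alert = monitor.record(outcome)
    print("alert" if alert else "ok")
```

Waiting for a full window before alerting is a design choice that trades detection speed for fewer false alarms.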

Automated version control and rollbacks

Neuroform offers automated version control and rollbacks, helping to ensure that deployed models are running the most accurate and up-to-date version.

Streamlining and optimising the deployment process

Neuroform streamlines and optimises the deployment process for AI models at the edge, reducing deployment time and costs.

Cost savings and faster deployment

Neuroform's existing users are saving 90% of their operational costs and deploying into new markets 30x faster, thanks to savings in bandwidth, cloud bills and labelling.

Built-in security measures

Neuroform includes built-in security measures to protect your models and data.

Get in touch

Contact LGN now to find out more about our edge AI products and solutions.

Contact us by email: sales@lgn.ai